Blog #1011: Final Thoughts

Today was the last seminar of the year. First off, I’d like to thank my wonderful professors and peers; without them, I wouldn’t have learned so much. Whether it was agreeing vehemently regarding the recent political circumstances or arguing about what “singularity” really entails, every discussion was an opportunity for me to better understand the Internet and the future of technology.

Now…onto the seminar itself. I found that one of the most interesting parts of today’s discussion was about Facebook’s Free Basics. For those who don’t know, Basics is an initiative that would make a selected set of websites accessible, free of data charges, to many people across the globe who otherwise couldn’t get online. We had quite the heated discussion during our seminar today about whether it would be a good idea.

On one hand, there’s the argument that Basics goes directly against the philosophy of net neutrality. Because it would only allow people to access a limited variety of websites, it would inherently restrict the knowledge that users could gain.

On the other hand, isn’t some information better than no information? One of my classmates made an analogy to sweatshops; if someone’s starving, then isn’t providing them with a factory job with harsh conditions still better? Unfortunately, this caused more controversy.

Personally, I think that Basics is a great idea. Unlike working in a sweatshop, having limited internet connectivity isn’t, in my view, inherently detrimental to one’s quality of life. If the websites were selected by the government, then brainwashing would be a real threat. But Facebook is the one establishing what websites can be accessed. And I don’t think AccuWeather is about to be the next threat for brainwashing people.

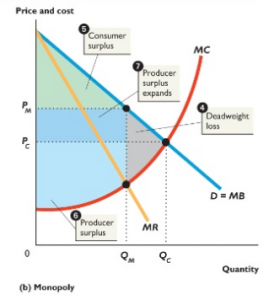

BUT there are other concerns, as addressed in this article I found. It’s important to recognize that Basics is not a charity; as the article points out, there are obviously commercial interests, so Basics could disrupt the market as we know it now. Thus, I think it’s imperative to examine the potential repercussions of Basics more closely before actually implementing it. In general, I think that thinking ahead in technology is so, so important, and I hope that as I try to join the innovating global community, I’ll keep that in mind. This seminar has really ingrained that in me, and I really appreciate that.

Again, thank you for a wonderful semester!