Can gerrymandering be solved with cut-and-choose?

October 28th, 2013

Update March 25, 2019: Wesley Pegden, Ariel D. Procaccia, and Dingli Yu have an elegant working out of the proposal below that they call “I cut, you freeze.” Pegden and Procaccia describe it in a Washington Post opinion piece.


| …how to split a cupcake… “Halves” image by flickr user Julie Remizova. |

Why is gerrymandering even possible in a country with a constitutional right to equal protection? The Fourteenth Amendment says:

No State shall make or enforce any law which shall…deny to any person within its jurisdiction the equal protection of the laws.

By reshaping districts to eliminate the voting power of particular individuals, as modern district mapping software allows, some persons are being denied equal protection, I’d have thought. And so have certain Supreme Court justices.

It’s hard to know what to do about the problem. Appeals to fairness aren’t particularly helpful, since who decides what’s fair? It would be nice to think that requirements of “compact districts of contiguous territory” (as Justice Harlan put it) would be sufficient. But this reduces the problem of districting to a mathematical optimization problem; James Case proposes something like minimum isoperimetric quotient tessellation of a polygon. But such purely mathematical approaches may yield results that violate our intuitions about what is fair. They ignore other criteria, such as “natural or historical boundary lines”, determined for instance by geographical features like rivers and mountains or shared community interests. These boundaries may not coincide with the mathematical optima, so any mathematical formulation would need to be defeasible to take into account such features. This leads us right back to how to decide in which cases the mathematical formulation should be adjusted: who should decide what is fair?

A comment at a ProPublica article about gerrymandering from “damien” caught my attention as a nice way out of this quandary. In essence, he proposes that the parties themselves choose what’s fair.

The first solution to gerrymandering is to have a fitness measure for a proposed districting (e.g. the sum of the perimeters), and then to allow any individual or organisation to propose a districting, with the winner having the best fitness value.

What “damien” is proposing, I take it, is the application of an algorithm somewhat like one familiar from computer science (especially cryptography) and grade school cafeterias known as “cut and choose”. How do you decide how to split a cupcake between two kids? One cuts; the other chooses. The elegance of cut-and-choose is that it harmonizes the incentives of the two parties. The cutter is incentivized to split equally, since the chooser can punish inequity.

Cut-and-choose is asymmetrical; the two participants have different roles. In a symmetrical variant, each participant proposes a cut and an objective third party selects whichever is better according to the pertinent objective measure. This variant shares the benefit that each participant has an incentive to propose a more nearly equal cut than the other. If Alice proposes a cut that gives her 60% of the cupcake and Bob 40%, she risks Bob proposing a more nearly equal split that gives her only 45% with him taking the remaining 55%. To avoid getting taken advantage of, her best bet is to propose a split as nearly equal as possible.
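The symmetrical variant can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, each proposal is reduced to the fraction of the cupcake Alice would receive, and "better" is taken to mean "closer to an even split".

```python
def imbalance(alice_share: float) -> float:
    """Distance of a proposed split from a perfectly even one (0 is ideal)."""
    return abs(alice_share - 0.5)


def select_cut(proposals: dict[str, float]) -> str:
    """Play the objective third party.

    `proposals` maps each proposer's name to the fraction of the cupcake
    that Alice would receive under that proposer's cut. The proposer whose
    cut is most nearly equal wins (ties go to the first proposer listed).
    """
    return min(proposals, key=lambda name: imbalance(proposals[name]))


# The example from the text: Alice grabs 60%, Bob counters with 45/55.
proposals = {"Alice": 0.60, "Bob": 0.45}
winner = select_cut(proposals)
alice_gets = proposals[winner]  # Alice ends up with Bob's 45% offer
```

Because Bob's cut is nearer to even, it is the one selected, and Alice's greedy proposal leaves her worse off than an honest one would have.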

In the anti-gerrymandering application of the idea, the two parties propose districtings, which they could gerrymander however they wanted. Whichever of the two proposals has the lower objective function (lower isoperimetric quotient, say) is chosen. Thus, if one party gerrymanders too much, their districting will be dropped in favor of the other party’s proposal. Each party has an incentive to hew relatively close to a compact partition, while being allowed to deviate in appropriate cases.

A nice property of this approach is that the optimization problem doesn’t ever need to be solved. All that is required is the evaluation of the objective function for the two proposed districtings, which is computationally far simpler. (In fact, I’d guess the minimum isoperimetric quotient optimization problem might well be NP-hard.)
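To make the "evaluation only" point concrete, here is a minimal sketch of scoring two proposed districtings and picking the winner. Everything in it is an assumption for illustration: districts are simple polygons given as vertex lists, the names are hypothetical, and the objective is a lower-is-better compactness measure, P²/(4πA), which equals 1 for a circle and grows as a district becomes more contorted.

```python
import math

Point = tuple[float, float]
Polygon = list[Point]


def perimeter(poly: Polygon) -> float:
    """Sum of the edge lengths of a closed polygon."""
    n = len(poly)
    return sum(math.dist(poly[i], poly[(i + 1) % n]) for i in range(n))


def area(poly: Polygon) -> float:
    """Area of a simple polygon via the shoelace formula."""
    n = len(poly)
    twice_area = sum(
        poly[i][0] * poly[(i + 1) % n][1] - poly[(i + 1) % n][0] * poly[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2


def noncompactness(poly: Polygon) -> float:
    """P^2 / (4*pi*A): 1 for a circle, larger for less compact shapes."""
    return perimeter(poly) ** 2 / (4 * math.pi * area(poly))


def score(districting: list[Polygon]) -> float:
    """Objective for a whole districting: total noncompactness (lower wins)."""
    return sum(noncompactness(d) for d in districting)


def choose(proposals: dict[str, list[Polygon]]) -> str:
    """Return the name of the proposal with the lowest objective value."""
    return min(proposals, key=lambda name: score(proposals[name]))


# A 4x2 rectangular "state" split into two equal-area districts two ways.
proposals = {
    "compact": [
        [(0, 0), (2, 0), (2, 2), (0, 2)],
        [(2, 0), (4, 0), (4, 2), (2, 2)],
    ],
    "contorted": [
        [(0, 0), (3, 0), (3, 1), (1, 1), (1, 2), (0, 2)],
        [(3, 0), (4, 0), (4, 2), (1, 2), (1, 1), (3, 1)],
    ],
}
winner = choose(proposals)
```

Scoring a proposal takes time linear in the number of polygon vertices; at no point does anyone have to search the space of possible districtings.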

There are problems, of course. The procedure is subject to gaming when the proposal-generating process is not private to the parties. It is also unclear how to extend the method to more than two parties: the obvious generalization works once the eligible parties are determined, but the hard part is deciding which parties are eligible to propose a redistricting. Most critically, the method is subject to collusion, especially in cases where both parties benefit from gerrymandering. In particular, both parties benefit from a districting that protects incumbencies for both parties. The parties could agree, for instance, not to disturb each other’s safe districts, and would benefit from observing the agreement.

Nonetheless, once districting is thought of in terms of mechanism design, the full range of previous algorithms can be explored. Somewhere in the previous literature there might be a useful solution. (Indeed, the proposal here is essentially the first step in Brams, Jones, and Klamler’s surplus procedure for cake-cutting.)

Of course, as with many current political problems (campaign financing being the clearest example), the big question is how such new mechanisms would be instituted, given that it is not in the incumbent majority party’s interest to do so. Until that’s sorted out, I’m not holding out much hope.

Aaron Swartz’s legacy

January 13th, 2013

Government zealotry in prosecuting brilliant people is a repeating theme. It gave rise to one of the great intellectual tragedies of the 20th century, the death of Alan Turing after his appalling treatment by the British government. Sadly, we have just been presented with another case. Aaron Swartz committed suicide at his apartment in New York this week in the face of an overreaching prosecution of his JSTOR download action. I never met him, but I understand from those who knew him well that he was a brilliant, committed person who only acted intending to do good in the world. I’m on the record disagreeing with the particulars of the open access tactic for which he was being prosecuted, on the basis that it was counterproductive. But I empathize with the gut instinct that led to his effort. I hope that it will inspire us all to redouble our efforts to eliminate the needless restraints on the distribution and use of scholarship as Swartz himself was trying to achieve.

For Ada Lovelace Day 2012: Karen Spärck Jones

October 16th, 2012


| Karen Spärck Jones, 1935-2007 |

In honor of Ada Lovelace Day 2012, I write about the only female winner of the Lovelace Medal awarded by the British Computer Society for “individuals who have made an outstanding contribution to the understanding or advancement of Computing”. Karen Spärck Jones was the 2007 winner of the medal, awarded shortly before her death. She also happened to be a leader in my own field of computational linguistics, a past president of the Association for Computational Linguistics. Because we shared a research field, I had the honor of knowing Karen and the pleasure of meeting her on many occasions at ACL meetings.

One of her most notable contributions to the field of information retrieval was the idea of inverse document frequency. Well before search engines were a “thing”, Karen was among the leaders in figuring out how such systems should work. Already in the 1960s there had arisen the idea of keyword searching within sets of documents, and the notion that the more “hits” a document receives, the higher ranked it should be. Karen noted in her seminal 1972 paper “A statistical interpretation of term specificity and its application in retrieval” that not all hits should be weighted equally. For terms that are broadly distributed throughout the corpus, their occurrence in a particular document is less telling than occurrence of terms that occur in few documents. She proposed weighting each term by its “inverse document frequency” (IDF), which she defined as log(N/(n + 1)) where N is the number of documents and n the number of documents containing the keyword under consideration. When the keyword occurs in all documents, IDF approaches 0 for large N, but as the keyword occurs in fewer and fewer documents (making it a more specific and presumably more important keyword), IDF rises. The two notions of weighting (frequency of occurrence of the keyword together with its specificity as measured by inverse document frequency) are combined multiplicatively in the by now standard tf*idf metric; tf*idf or its successors underlie essentially all information retrieval systems in use today.
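A small Python sketch may help make the weighting concrete. It follows the formula as stated above, log(N/(n + 1)); the function names, the use of the natural logarithm, the toy corpus, and the choice of raw counts for term frequency are all assumptions made for illustration.

```python
import math


def idf(num_docs: int, docs_with_term: int) -> float:
    """Inverse document frequency as given in the text: log(N / (n + 1))."""
    return math.log(num_docs / (docs_with_term + 1))


def tf_idf(term: str, doc: list[str], corpus: list[list[str]]) -> float:
    """Raw term frequency in `doc` times the term's IDF over `corpus`."""
    tf = doc.count(term)
    n = sum(1 for d in corpus if term in d)
    return tf * idf(len(corpus), n)


# A toy corpus of tokenized documents (hypothetical data).
corpus = [
    ["the", "cat", "sat"],
    ["the", "dog", "barked"],
    ["the", "cat", "and", "the", "golem"],
]

common = tf_idf("the", corpus[2], corpus)    # occurs in every document
rare = tf_idf("golem", corpus[2], corpus)    # occurs in only one document
```

Even though "the" occurs twice in the last document and "golem" only once, the rarer term receives the higher weight, which is the point of the specificity correction.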

In Karen’s interview for the Lovelace Medal, she opined that “Computing is too important to be left to men.” Ada Lovelace would have agreed.

Talmud and the Turing Test

June 16th, 2012


| …the Golem… Image of the statue of the Golem of Prague at the entrance to the Jewish Quarter of Prague by flickr user D_P_R. Used by permission (CC-BY 2.0). |

Alan Turing, the patron saint of computer science, was born 100 years ago this week (June 23). I’ll be attending the Turing Centenary Conference at University of Cambridge this week, and am honored to be giving an invited talk on “The Utility of the Turing Test”. The Turing Test was Alan Turing’s proposal for an appropriate criterion to attribute intelligence (that is, capacity for thinking) to a machine: you verify through blinded interactions that the machine has verbal behavior indistinguishable from a person.

In preparation for the talk, I’ve been looking at the early history of the premise behind the Turing Test, that language plays a special role in distinguishing thinking from nonthinking beings. I had thought it was an Enlightenment idea, that until the technological advances of the 16th and 17th centuries, especially clockwork mechanisms, the whole question of thinking machines would never have entertained substantive discussion. As I wrote earlier,

Clockwork automata provided a foundation on which one could imagine a living machine, perhaps even a thinking one. In the midst of the seventeenth-century explosion in mechanical engineering, the issue of the mechanical nature of life and thought is found in the philosophy of Descartes; the existence of sophisticated automata made credible Descartes’s doctrine of the bête-machine (beast-machine), that animals were machines. His argument for the doctrine incorporated the first indistinguishability test between human and machine, the first Turing test, so to speak.

But I’ve seen occasional claims here and there that there is in fact a Talmudic basis to the Turing Test. Could this be true? Was the Turing Test presaged, not by centuries, but by millennia?

Uniformly, the evidence for Talmudic discussion of the Turing Test is a single quote from Sanhedrin 65b.

Rava said: If the righteous wished, they could create a world, for it is written, “Your iniquities have been a barrier between you and your God.” For Rava created a man and sent him to R. Zeira. The Rabbi spoke to him but he did not answer. Then he said: “You are [coming] from the pietists: Return to your dust.”

Rava creates a Golem, an artificial man, but Rabbi Zeira recognizes it as nonhuman by its lack of language and returns it to the dust from which it was created.

This story certainly describes the use of language to unmask an artificial human. But is it a Turing Test precursor?

It depends on what one thinks are the defining aspects of the Turing Test. I take the central point of the Turing Test to be a criterion for attributing intelligence. The title of Turing’s seminal Mind article is “Computing Machinery and Intelligence”, wherein he addresses the question “Can machines think?”. Crucially, the question is whether the “test” being administered by Rabbi Zeira is testing the Golem for thinking, or for something else.

There is no question that verbal behavior can be used to test for many things that are irrelevant to the issues of the Turing Test. We can go much earlier than the Mishnah to find examples. Consider Judges 12:5–6 (King James Version):

5 And the Gileadites took the passages of Jordan before the Ephraimites: and it was so, that when those Ephraimites which were escaped said, Let me go over; that the men of Gilead said unto him, Art thou an Ephraimite? If he said, Nay;

6 Then said they unto him, Say now Shibboleth: and he said Sibboleth: for he could not frame to pronounce it right. Then they took him, and slew him at the passages of Jordan: and there fell at that time of the Ephraimites forty and two thousand.

The Gileadites use verbal indistinguishability (of the pronunciation of the original shibboleth) to unmask the Ephraimites. But they aren’t executing a Turing Test. They aren’t testing for thinking but rather for membership in a warring group.

What is Rabbi Zeira testing for? I’m no Talmudic scholar, so I defer to the experts. My understanding is that the Golem’s lack of language indicated not its own deficiency per se, but the deficiency of its creators. The Golem is imperfect in not using language, a sure sign that it was created by pietistic kabbalists who themselves are without sufficient purity.

Talmudic scholars note that the deficiency the Golem exhibits is intrinsically tied to the method by which the Golem is created: language. The kabbalistic incantations that ostensibly vivify the Golem were generated by mathematical combinations of the letters of the Hebrew alphabet. Contemporaneous understanding of the Golem’s lack of speech was connected to this completely formal method of kabbalistic letter magic: “The silent Golem is, prima facie, a foil to the recitations involved in the process of his creation.” (Idel, 1990, pages 264–5) The imperfection demonstrated by the Golem’s lack of language is not its inability to think, but its inability to wield the powers of language manifest in Torah, in prayer, in the creative power of the kabbalist incantations that gave rise to the Golem itself.

Only much later does interpretation start connecting language use in the Golem to soul, that is, to an internal flaw: “However, in the medieval period, the absence of speech is related to what was conceived then to be the highest human faculty: reason according to some writers, or the highest spirit, Neshamah, according to others.” (Idel, 1990, page 266, emphasis added)

By the 17th century, the time was ripe for consideration of whether nonhumans had a rational soul, and how one could tell. Descartes’s observations on the special role of language then serve as the true precursor to the Turing Test. Unlike the sole Talmudic reference, Descartes discusses the connection between language and thinking in detail and in several places — the Discourse on the Method, the Letter to the Marquess of Newcastle — and his followers — Cordemoy, La Mettrie — pick up on it as well. By Turing’s time, it is a natural notion, and one that Turing operationalizes for the first time in his Test.

The test of the Golem in the Sanhedrin story differs from the Turing Test in several ways. There is no discussion that the quality of language use was important (merely its existence), no mention of indistinguishability of language use (but Descartes didn’t either), and certainly no consideration of Turing’s idea of blinded controls. But the real point is that at heart the Golem test was not originally a test for the intelligence of the Golem at all, but of the purity of its creators.

References

Idel, Moshe. 1990. Golem: Jewish magical and mystical traditions on the artificial anthropoid, Albany, N.Y.: State University of New York Press.

Processing special collections: An archivist’s workstation

May 29th, 2012


| John Tenniel, c. 1864. Study for illustration to Alice’s adventures in wonderland. Harcourt Amory collection of Lewis Carroll, Houghton Library, Harvard University. |

We’ve just completed spring semester during which I taught a system design course jointly in Engineering Sciences and Computer Science. The aim of ES96/CS96 is to help the students learn about the process of solving complex, real-world problems — applying engineering and computational design skills — by undertaking an extended, focused effort directed toward an open-ended problem defined by an interested “client”.

The students work independently as a self-directed team. The instructional staff provides coaching, but the students do all of the organization and carrying out of the work, from fact-finding to design to development to presentation of their findings.

This term the problem to be addressed concerned the Harvard Library’s exceptional special collections, vast holdings of rare books, archives, manuscripts, personal documents, and other materials that the library stewards. Harvard’s special collections are unique and invaluable, but are useful only insofar as potential users of the material can find and gain access to them. Despite herculean efforts of an outstanding staff of archivists, the scope of the collections means that large portions are not catalogued, or catalogued in insufficient detail, making materials essentially unavailable for research. And this problem is growing as the cataloging backlog mounts. The students were asked to address core questions about this valuable resource: What accounts for this problem at its core? Can tools from computer science and technology help address the problems? Can they even qualitatively improve the utility of the special collections?

The students’ recommendations centered around the design, development, and prototyping of an “archivist’s workstation” and the unconventional “flipped” collections processing that the workstation enabled. Their process involves exhaustive but lightweight digitization of a collection as a precursor to highly efficient metadata acquisition on top of the digitized images, rather than the conventional approach of digitizing selectively only after all processing of the collection is performed. The “digitize first” approach means that documents need only be touched once, with all of the sorting, arrangement, and metadata application being performed virtually using optimized user interfaces that they designed for these purposes. The output is a dynamic finding aid with images of all documents, complete with search and faceted browsing of the collection, to supplement the static finding aid of traditional archival processing. The students estimate that processing in this way would be faster than current methods, while delivering a superior result. Their demo video (below) gives a nice overview of the idea.

The deliverables for the course are now available at the course web site, including the final report and a videotape of their final presentation before dozens of Harvard archivists, librarians, and other members of the community.

I hope others find the ideas that the students developed as provocative and exciting as I do. I’m continuing to work with some of them over the summer and perhaps beyond, so comments are greatly appreciated.

An efficient journal

March 6th, 2012


| “You seem to believe in fairies.” Photo of the Cottingley Fairies, 1917, by Elsie Wright via Wikipedia. |

Aficionados of open access should know about the Journal of Machine Learning Research (JMLR), an open-access journal in my own research field of artificial intelligence, a subfield of computer science concerned with the computational implementation and understanding of behaviors that in humans are considered intelligent. The journal became the topic of some dispute in a conversation that took place a few months ago in the comment stream of the Scholarly Kitchen blog between computer science professor Yann LeCun and scholarly journal publisher Kent Anderson, with LeCun stating that “The best publications in my field are not only open access, but completely free to the readers and to the authors.” He used JMLR as the exemplar. Anderson expressed incredulity:

I’m not entirely clear how JMLR is supported, but there is financial and infrastructure support going on, most likely from MIT. The servers are not “marginal cost = 0” — as a computer scientist, you surely understand the 20-25% annual maintenance costs for computer systems (upgrades, repairs, expansion, updates). MIT is probably footing the bill for this. The journal has a 27% acceptance rate, so there is definitely a selection process going on. There is an EIC, a managing editor, and a production editor, all likely paid positions. There is a Webmaster. I think your understanding of JMLR’s financing is only slightly worse than mine — I don’t understand how it’s financed, but I know it’s financed somehow. You seem to believe in fairies.

Since I have some pretty substantial knowledge of JMLR and how it works, I thought I’d comment on the facts of the matter.

Tales of peer review, episode 1: Boyer and Moore’s MJRTY algorithm

September 23rd, 2011

I’m generally a big fan of peer review. I think it plays an important role in the improvement and “chromatography” of the scholarly literature. But sometimes. Sometimes.


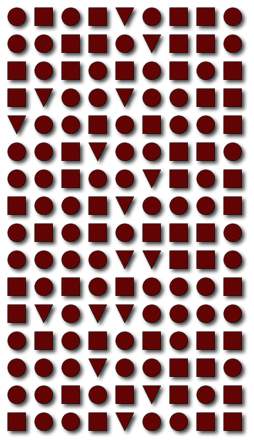

| The Boyer-Moore MJRTY algorithm allows efficient determination of which shape (triangle, circle, square) is in the majority without counting each shape. |

This past week I was reading Robert Boyer and J Strother Moore‘s paper on computing the majority element of a multiset, which presents a very clever simple algorithm for this fundamental problem and a description of a mechanical proof of its correctness. The authors aptly consider the work a “minor landmark in the development of formal verification and automated reasoning”.
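For readers who have not seen it, the idea behind the majority vote algorithm can be sketched as follows. This is my own Python rendering, not the authors' Fortran, and the function names are hypothetical. The first pass pairs off unlike elements and leaves a single candidate; the second pass, needed only when a majority is not guaranteed to exist, confirms it.

```python
from collections.abc import Iterable
from typing import Optional, TypeVar

T = TypeVar("T")


def majority_candidate(items: Iterable[T]) -> Optional[T]:
    """Single pass, constant extra space: return the only possible majority.

    A counter tracks how many unpaired copies of the current candidate
    remain. Each differing element cancels one copy; when the counter
    hits zero, the next element becomes the new candidate.
    """
    candidate: Optional[T] = None
    count = 0
    for item in items:
        if count == 0:
            candidate, count = item, 1
        elif item == candidate:
            count += 1
        else:
            count -= 1
    return candidate


def majority(items: list[T]) -> Optional[T]:
    """Return the element occurring more than len(items)/2 times, else None."""
    candidate = majority_candidate(items)
    # Verification pass: the candidate is only *possibly* the majority.
    if items and 2 * items.count(candidate) > len(items):
        return candidate
    return None


shapes = ["triangle", "circle", "triangle", "square", "triangle"]
result = majority(shapes)
```

The whole computation runs in linear time, and the first pass never keeps a separate tally per distinct element.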

Below is the postscript to that paper, in its entirety, which describes the history of the paper including how and why it was “repeatedly rejected for publication”. (It was eventually published as a chapter in a 1991 festschrift for Woody Bledsoe, ten years after it was written, and is now also available from Moore’s website.)

In this paper we have described a linear time majority vote algorithm and discussed the mechanically checked correctness proof of a Fortran implementation of it. This work has a rather convoluted history which we would here like to clarify.

The algorithm described here was invented in 1980 while we worked at SRI International. A colleague at SRI, working on fault tolerance, was trying to specify some algorithms using the logic supported by “Boyer-Moore Theorem Prover.” He asked us for an elegant definition within that logic of the notion of the majority element of a list. Our answer to this challenge was the recursive expression of the algorithm described here.

In late 1980, we wrote a Fortran version of the algorithm and proved it correct mechanically. In February, 1981, we wrote this paper, describing that work. In our minds the paper was noteworthy because it simultaneously announced an interesting new algorithm and offered a mechanically checked correctness proof. We submitted the paper for publication.

In 1981 we moved to the University of Texas. Jay Misra, a colleague at UT, heard our presentation of the algorithm to an NSF site-visit team. According to Misra (private communication, 1990): “I wondered how to generalize [the algorithm] to detect elements that occur more than n/k times, for all k, k ≥ 2. I developed algorithm 2 [given in Section 3 of [9]] which is directly inspired by your algorithm. Also, I showed that this algorithm is optimal [Section 5, op. cit.]. On a visit to Cornell, I showed all this to David Gries; he was inspired enough to contribute algorithm 1 [Section 2, op. cit.].” In 1982, Misra and Gries published their work [9], citing our technical report appropriately as “submitted for publication.”

However, our paper was repeatedly rejected for publication, largely because of its emphasis on Fortran and mechanical verification. A rewritten version emphasizing the algorithm itself was rejected on the grounds that the work was superceded by the paper of Misra and Gries!

When we were invited to contribute to the Bledsoe festschrift we decided to use the opportunity to put our original paper into the literature. We still think of this as a minor landmark in the development of formal verification and automated reasoning: here for the first time a new algorithm is presented along with its mechanically checked correctness proof—eleven years after the work.

I have to think the world would have been better off if Boyer and Moore had just posted the paper to the web in 1981 and been done with it. Unfortunately, the web hadn’t been developed yet.

Happy Ada Lovelace Day

March 24th, 2010


| Fragment of Charles Babbage’s first difference engine, from the Harvard University Collection of Historical Scientific Instruments. |

In honor of Ada Lovelace Day, here is a fragment of Charles Babbage’s difference engine, from the Collection of Historical Scientific Instruments at Harvard University. Babbage went on to design a programmable computer, the Analytical Engine, though it was never built. However, Augusta Ada King, Countess of Lovelace, in her 1843 translation, annotation, and augmentation of a French description of the machine, provided an algorithm for computing the Bernoulli numbers, which is arguably the first algorithm designed for computer implementation. She is consequently considered the world’s first computer programmer.

UPDATE (May 2, 2010): Bruce Beresford will apparently be directing a movie about Ada Lovelace entitled “The Enchantress of Numbers”, starring Billy Crudup as Charles Babbage and perhaps Zooey Deschanel (or not) as the Countess herself. A way cooler computer science film, honestly, than a movie about Facebook.

Britain apologizes for treatment of Alan Turing

September 13th, 2009

- Image by Whimsical Chris via Flickr

Prime Minister Gordon Brown has apologized on behalf of the British government for the appalling treatment of Alan Turing, who was obliged to undergo chemical castration for the crime of being gay. Prime Minister Brown’s statement in the Telegraph follows an online petition drive that enlisted over 30,000 British citizens and residents, and a follow-on global petition with over 10,000 signatories worldwide.

Much has been made in the discussions surrounding the petition efforts and in the Prime Minister’s statement of Turing’s code-breaking efforts at Bletchley Park, which directly contributed to the Allied victory in World War II. Less mentioned, but also central to his legacy, are Turing’s seminal contributions to computer science. It is no exaggeration to say that Alan Turing was the progenitor of computer science, in his brief career building the foundations of theory, hardware, systems, artificial intelligence, even computational biology. His death at 41 as a result of the British government’s misguided “therapy” constitutes one of the great intellectual tragedies of the twentieth century. I commend Prime Minister Brown for his prompt and complete apology.

“Don’t ask, don’t tell” rights retention for scholarly articles

June 18th, 2009

A strange social contract has arisen in the scholarly publishing field, a kind of “don’t ask, don’t tell” approach to online distribution of articles by authors. Publishers officially forbid online distribution, authors do it anyway without telling the publishers, and publishers don’t ask them to stop even though it violates contractual obligations. What happens when you refuse to play that game? Read on.
